Docker vs. Vagrant - Help me decide

A few years ago, virtual machine tools like [Vagrant](https://stackshare.io/vagrant) were among the few ways to set up a replicable, isolated environment for local software development. Since around 2014, though, container platforms, with [Docker](https://stackshare.io/docker) the most notable, have taken off in popularity and search volume.

Search volume of "Docker" and "Vagrant" over the past five years.

While search volume for "Docker" has overtaken "Vagrant", there are still legitimate reasons to use each of them. Each offers its own pros and cons when it comes to initial setup, sharing configurations, resource utilization, and production use.

Setup Steps

Because both Vagrant and Docker are relatively mature technologies, each has its own downloadable installer and documentation. The only prerequisite is the ability to use the command line on your operating system. Note that running both Vagrant and Docker at the same time is possible, but not advised as they can each use considerable system resources. Let's compare the setup process for each of these two tools.

Running Your First Vagrant Box

Vagrant is designed to make configuring and sharing virtual machines easier. Because it does not provide its own virtual machine, it must be used in conjunction with a tool like VirtualBox. Installing both is usually straightforward, though, as Vagrant automatically detects your installation of VirtualBox and configures itself accordingly.

In order to get Vagrant running:

- Download and install the version of VirtualBox required for your operating system.

- Download and install the version of Vagrant required for your operating system.

- Initialize a project to use a Vagrant box from the command line (this creates a Vagrantfile in the current directory):

vagrant init hashicorp/precise64

  - Vagrant boxes are similar to Docker images, but they provide a configuration for the entire virtual machine, and not just a single process as in the case of Docker.

- Run the Vagrant box:

vagrant up

  - If you haven't run this box before, Vagrant will need to download it. This could take a few minutes, and unfortunately will need to be done each time you use a new Vagrant box.

Now you should be running a Linux virtual machine with Vagrant on your host machine. For more detailed instructions, refer to Vagrant's getting started documentation.

Running Your Docker Container

While Vagrant allows you to provision a virtual machine that emulates an entire Linux system, Docker enables you to run individual containers (or networks of containers) that each share the same underlying kernel. Unlike Vagrant, Docker comes with its own virtual machine provider on Mac and Windows, while on Linux, Docker uses the host system's kernel.

Setting up Docker and running your first container takes just a few steps as well:

- Choose and download the version of Docker Community Edition that's right for your operating system.

- Run the installation steps provided to get the GUI and command line tools installed. For example, on Mac, you drag the Docker whale to your Applications directory and open it.

- Open your terminal and download an Ubuntu Docker image:

docker pull ubuntu

  - This step may take a few minutes depending on your connection speed, but Docker will "remember" this image, and any future Docker images that build on it will be quicker to download.

- Run a container with an active terminal session:

docker run -it ubuntu

You should now have terminal access to a running Ubuntu container. During a typical development workflow, you will probably need to use other docker run command options, so be sure to read the docs for more information.
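As a rough illustration of the kind of options you might reach for, the sketch below assembles a `docker run` invocation that removes the container on exit, mounts the current directory, sets a working directory, and publishes a port. The `/app` path, the port numbers, and the `run` helper are assumptions made for this example. The helper only prints the command unless `DRY_RUN=0` is set, so nothing starts by accident.

```shell
# Helper (hypothetical, for illustration only): print the command by default,
# and execute it only when DRY_RUN=0 is set in the environment.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# --rm  remove the container when the session ends
# -it   keep an interactive terminal attached
# -v    mount the current directory at /app in the container (assumed path)
# -w    start the shell in /app
# -p    publish container port 80 on host port 8080 (assumed ports)
run docker run --rm -it \
  -v "$PWD":/app \
  -w /app \
  -p 8080:80 \
  ubuntu bash
```

Running the same snippet with `DRY_RUN=0` in the environment would start the container for real, provided Docker is installed and running.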

Sharing Configurations

One of the problems that both Docker and Vagrant set out to solve is that of inconsistent environments between team members or remote machines.

Vagrantfiles

With Vagrant, you can create a Vagrantfile, and check it into version control to share it with anyone who clones your project. This way, new developers who join your team should be able to download the project files, run vagrant up, and get right to work. You can even set Vagrant to create multiple virtual machines at once using the multi-machine feature. While this can be quite resource intensive, it does allow you to more accurately replicate a production environment.
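To make this concrete, here is a minimal sketch that writes a small Vagrantfile into a project directory. The directory name, the forwarded port, the memory and CPU values, and the placeholder provisioning command are all assumptions for illustration, not settings recommended by the article.

```shell
# Sketch: write a minimal Vagrantfile into a project directory.
# project_dir is a hypothetical location; override it with PROJECT_DIR.
project_dir="${PROJECT_DIR:-vagrant-demo}"
mkdir -p "$project_dir"

cat > "$project_dir/Vagrantfile" <<'EOF'
Vagrant.configure("2") do |config|
  # Box that every developer on the project will boot
  config.vm.box = "hashicorp/precise64"

  # Reach the guest's port 80 from the host at localhost:8080 (assumed ports)
  config.vm.network "forwarded_port", guest: 80, host: 8080

  # Cap the resources VirtualBox gives this machine (assumed values)
  config.vm.provider "virtualbox" do |vb|
    vb.memory = 1024
    vb.cpus = 1
  end

  # Placeholder provisioning step; a real project would install packages here
  config.vm.provision "shell",
    inline: "echo 'Provisioned by Vagrant' > /home/vagrant/provisioned.txt"
end
EOF

echo "Wrote $project_dir/Vagrantfile"
```

With a file like this committed to version control, a teammate would run `vagrant up` in the project directory and then `vagrant ssh` to log in to the machine.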

While this method of sharing configuration is great for resetting your local or server environment to a known state, you may want to keep some of its drawbacks in mind:

- Any developer who makes an environment change has to make those changes to the Vagrantfile as well. If they forget, other developers will not see the change.

- Some changes may be difficult to set up, especially if dependencies are chained. For example, if you need to install a new version of your database software, you may have to update drivers and other software that relies on that database.

- Starting up complex Vagrant environments may take several minutes. If your project requires environments to be started and stopped frequently (e.g., on a continuous integration server), Vagrant may slow things down.

Docker Images

Because Docker containers don't necessarily describe the entire system, but rather a single process, the method for sharing container configurations varies. You may need to share one or more of the artifacts below in order to provide other developers with a replicable environment.

Docker Images are pre-packaged software with all dependencies, code, and external libraries included. So if you want to share a complete working application with another developer or server but they don't need to edit the code, this may be the best method. This is the most common way to deploy Docker containers to servers or continuous integration environments.

Dockerfiles

Another method for sharing Docker Images is to share the Dockerfile and allow other developers to build their own version of the Docker Image on their machine. Typically you should commit the Dockerfile to version control and then developers can use the docker build command to get their own instance of the image. This method is more flexible than simply sharing the image alone because the Dockerfile outlines all the steps taken to build the image and it can be modified by other developers to update dependencies and prerequisite libraries.
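As a hedged sketch of what such a Dockerfile might contain, the snippet below writes one into a project directory and prints the build command. The directory name, the `demo-app` tag, the `curl` dependency, and the `/app` path are assumptions chosen for illustration.

```shell
# Sketch: write a small Dockerfile and show how it would be built.
# project_dir and image_tag are hypothetical names.
project_dir="${PROJECT_DIR:-docker-demo}"
image_tag="demo-app"
mkdir -p "$project_dir"

cat > "$project_dir/Dockerfile" <<'EOF'
# Base image; pinning a tag means every build starts from the same layers
FROM ubuntu:16.04

# Install dependencies in one layer and clear the apt cache to keep it small
RUN apt-get update \
 && apt-get install -y --no-install-recommends curl \
 && rm -rf /var/lib/apt/lists/*

# Copy the project into the image and work from there
WORKDIR /app
COPY . /app

# Default command when a container starts from this image
CMD ["bash"]
EOF

# Printed rather than executed so the sketch runs without Docker installed
echo "Next step: docker build -t $image_tag $project_dir"
```

A developer who needs a newer dependency would edit the `RUN` line, rebuild, and commit the change so that everyone else picks it up on their next build.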

Docker Compose

Both of the methods above assume that your application requires only a single process to run. This may be true for simple command line apps or libraries, but a complex web application will probably need multiple processes (e.g., database, web server, cache storage). Since each Docker container is typically built around a single main process, the best way to share a multi-container configuration is Docker Compose. With Compose, you can create a single docker-compose.yml file at the root of your project that specifies all the containers needed to get the application running. While typically slower than starting a single container, Docker Compose environments usually take much less time to start than a virtual machine.
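A minimal sketch of such a file, assuming a PHP web server backed by MySQL, might look like the following. The service names, port mapping, `./src` mount, and placeholder password are assumptions for illustration; the `php:apache` and `mysql:5.7` images are the official ones used in the LAMP comparison later in this article.

```shell
# Sketch: write a two-service docker-compose.yml into a project directory.
# project_dir is a hypothetical location; override it with PROJECT_DIR.
project_dir="${PROJECT_DIR:-compose-demo}"
mkdir -p "$project_dir"

cat > "$project_dir/docker-compose.yml" <<'EOF'
version: "3"
services:
  web:
    image: php:apache
    ports:
      - "8080:80"              # host port 8080 -> container port 80
    volumes:
      - ./src:/var/www/html    # serve code from the local src directory
    depends_on:
      - db                     # start the database container first
  db:
    image: mysql:5.7
    environment:
      MYSQL_ROOT_PASSWORD: example   # placeholder, not for production
    volumes:
      - db_data:/var/lib/mysql       # keep data across container restarts
volumes:
  db_data:
EOF

# Printed rather than executed so the sketch runs without Docker installed
echo "Next step: (cd $project_dir && docker-compose up -d)"
```

Committing this one file lets another developer bring up both containers with a single `docker-compose up -d` and tear them down again with `docker-compose down`.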

Resource Usage

Comparison of starting an Ubuntu container in Docker vs. an Ubuntu virtual machine with Vagrant. Docker takes 0.592s while Vagrant takes 37.9s to start.

Both Docker and Vagrant share memory, hard disk space, and processing power with the host machine that runs them, but the way that they use those resources is very different. This has implications for resource consumption and can limit the number of instances you can run on the host.

Because Vagrant uses a virtual machine, each instance has its own dedicated host system resources. This can be good as Vagrant offers a cap on resource usage, but it also means that each virtual machine will always use the maximum amount of RAM and CPU that you give it.

Resource usage is typically one of the strongest arguments for using Docker over Vagrant. Instead of giving each container its own dedicated resources, Docker allows all the running containers to share RAM and CPU with the host system. While you can place a cap on the resources that each container uses, containers typically won't use all of their dedicated resources while idling.
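For illustration, the sketch below assembles a `docker run` command that caps a container's memory and CPU with the `--memory` and `--cpus` flags, plus a `docker stats` command to check what the container actually consumes. The limit values, the container name, and the choice of the `nginx` image are assumptions; the commands are printed rather than executed.

```shell
# Assumed limits and names, chosen only for this example
mem_limit="512m"
cpu_limit="1.5"
container_name="capped-demo"

# Start a detached container that may use at most 512 MB of RAM and 1.5 CPUs
capped_cmd="docker run -d --name $container_name --memory=$mem_limit --cpus=$cpu_limit nginx"

# One-off snapshot of the container's actual CPU and memory usage
stats_cmd="docker stats --no-stream $container_name"

echo "$capped_cmd"
echo "$stats_cmd"
```

On an idle container, the usage reported by `docker stats` will usually sit well below the configured caps, which is the behavior described above.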

Both Docker and Vagrant can use significant drive space, but Docker has a slight edge. Each Vagrant box is downloaded completely independently of all other boxes, and each may use several gigabytes of hard disk space. If you need to use several different Ubuntu 16.04 boxes (weighing in at 567 MB each), you'll use up your hard disk space pretty quickly. Docker, on the other hand, reuses the parts of an image that are shared. So, if you use several different images that all extend an Ubuntu image (188 MB each), Docker may end up using only slightly more than 188 MB of hard disk space to store all of them. Because image size is a concern, many developers who use Docker choose to extend the alpine image, which comes in at around 5 MB.
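The arithmetic behind this comparison can be sketched in a few lines. The 567 MB and 188 MB figures come from the paragraph above; the number of environments and the size of each image's own layers are assumptions picked only to show the shape of the calculation.

```shell
box_count=5          # assumed number of environments
box_size_mb=567      # Ubuntu 16.04 Vagrant box size quoted above
base_image_mb=188    # Ubuntu Docker image size quoted above
extra_layer_mb=10    # assumed size of the layers unique to each image

# Every Vagrant box is stored in full
vagrant_total_mb=$(( box_count * box_size_mb ))

# Docker stores the shared base once, plus each image's unique layers
docker_total_mb=$(( base_image_mb + box_count * extra_layer_mb ))

echo "Vagrant: ${vagrant_total_mb} MB for ${box_count} boxes"
echo "Docker:  ${docker_total_mb} MB for ${box_count} images sharing one base"
```

Under these assumptions the five boxes take 2835 MB while the five images take 238 MB. The gap narrows as the images share less of their base.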

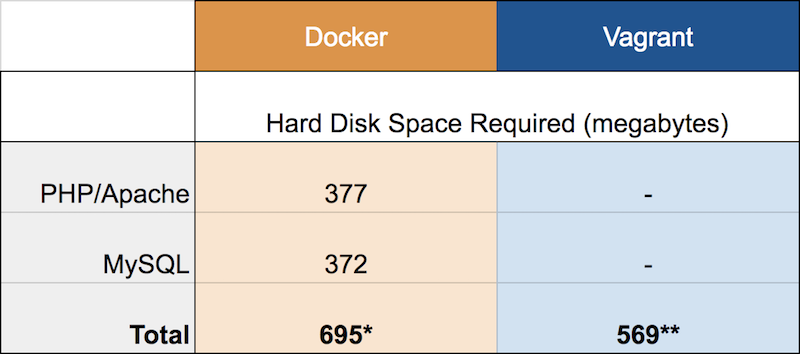

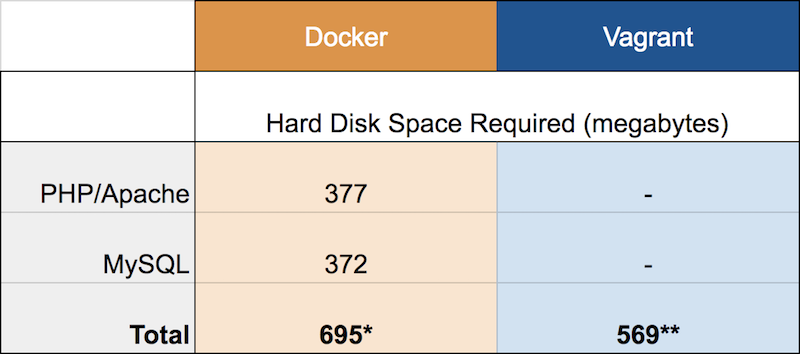

Disk Size of Multiple Ubuntu Images/Boxes on Docker and Vagrant

Docker images do stack up though, so if you're using multiple images that require different Linux distros or vary quite a bit, Vagrant can sometimes have a slight edge.

Disk Size of LAMP (Linux, Apache, MySQL, PHP) Stack on Docker and Vagrant

*Using the official Docker php:apache and mysql:5.7 images. Total considers unique and shared disk usage between images.

**Using Damien Lewis's Ubuntu 16.04 LAMP box.

Despite these advantages in resource utilization for Docker, this doesn't tell the whole story. Unlike Vagrant, Docker requires that the host machine run a Linux kernel. This means that Mac and Windows operating systems must actually run Docker within a virtual machine anyway. So, even though the processes in the virtual machine may be sharing resources, they're sharing the dedicated resources within the virtual machine, much like Vagrant setups would. Another drawback to Docker's reliance on Linux is that other common operating systems like BSD or Windows can't be run in Docker containers.

So, despite the performance benefits of using Docker, it's not always the best choice for system virtualization.

Production Use

Finally, the "holy grail" of virtualization is a single solution for local development, testing environments, and production servers. Both Vagrant and Docker offer production-level solutions, but again because of the fundamental differences in how each technology works, they are quite different in practice.

Vagrant Boxes in Production

In 2015, HashiCorp - Vagrant's parent company - announced a solution for production use of Vagrant boxes called Otto. While that tool has been abandoned, Vagrant does continue to support push, a relatively simple solution that can push updates to your application via FTP, Heroku, or a custom bash script.

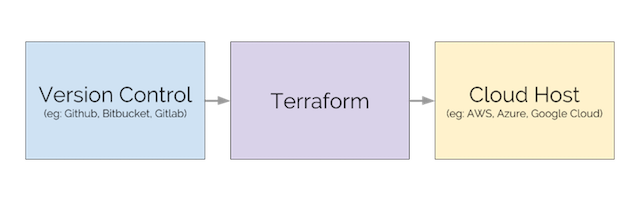

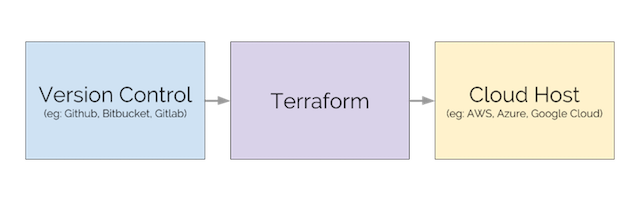

Vagrant is typically reserved for setting up single virtual machines, so unless your production app is very small, you'll probably need a more robust tool to handle multiple-server deployments, replication, and redundancy. While it's possible to use Vagrant boxes in production with push, HashiCorp recommends Terraform instead because it can handle replication, scaling, and multiple cloud hosting providers. HashiCorp provides examples of using Terraform on their website.

Docker in Production

Docker also has multiple options for production use depending on your needs. The simplest way to run Docker containers in production is to use a dedicated container hosting platform like Hyper.sh or Azure Container Instances. Both of these platforms come with a command line interface that lets you run containers on their remote hardware with a single command. For example, in the case of Hyper.sh, you can start an Nginx container like this:

hyper run -d -p 80:80 --name test-nginx nginx

hyper fip attach <HYPER IP ADDRESS> test-nginx

The downside to simple container hosting solutions is that they don't scale well for real-world systems. Most web applications will require several networked containers, environment variables, a database with a host volume, etc. Docker released a solution called Docker Swarm that builds on the Docker Compose file format and makes it easy to start and stop multiple containers using a single configuration file.

Swarm will work for many production apps, but as you continue to scale across multiple machines and start to need zero-downtime deployments and granular secrets management, Kubernetes becomes a better choice. Kubernetes was developed by Google as a production container orchestration platform, is now officially supported in Docker for Mac and Windows, and has a robust community around it if you ever run into issues.

Docker vs Vagrant Cloud: What are the differences?

Docker: Enterprise Container Platform for High-Velocity Innovation. The Docker Platform is the industry-leading container platform for continuous, high-velocity innovation, enabling organizations to seamlessly build and share any application (from legacy to what comes next) and securely run them anywhere.

Vagrant Cloud: Share, discover, and create Vagrant environments. Vagrant Cloud pairs with Vagrant to enable access, insight and collaboration across teams, as well as to bring exposure to community contributions and development environments.

Docker and Vagrant Cloud can be categorized as "Virtual Machine Platforms & Containers" tools.

Some of the features offered by Docker are:

- Integrated developer tools

- Open, portable images

- Shareable, reusable apps

On the other hand, Vagrant Cloud provides the following key features:

- Vagrant Share: A single command to share your local Vagrant environment to anyone in the world

- Box Distribution: Vagrant integration provides flexible versioning and support for private or community boxes

- Discover Boxes: Start new projects faster using the right box. Find trusted and top-used community boxes

Docker is an open source tool with 53.8K GitHub stars and 15.5K GitHub forks. Here's a link to Docker's open source repository on GitHub.
